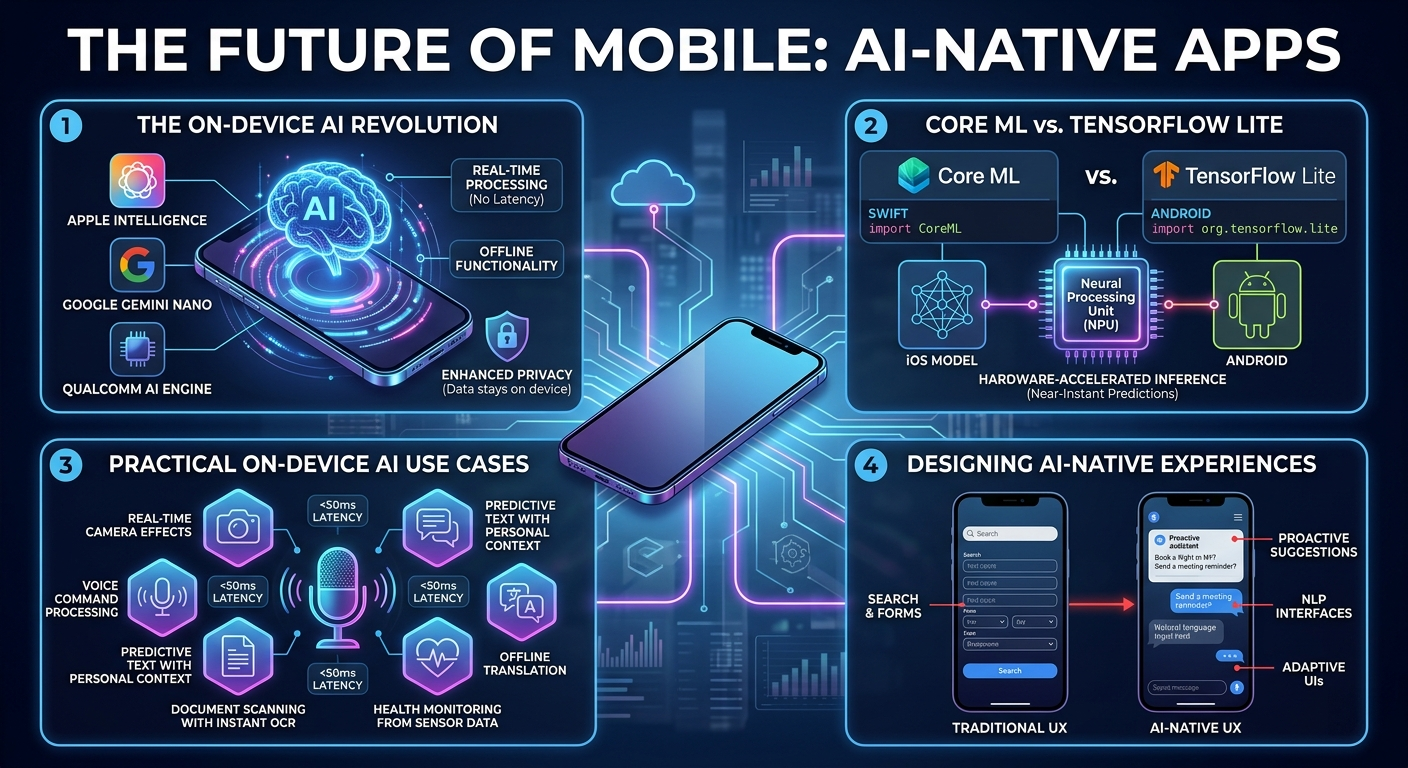

The on-device AI revolution.

With Apple Intelligence, Google Gemini Nano, and the Qualcomm AI Engine, mobile devices can now run sophisticated AI models locally. Running inference on the device removes the network round trip, keeps features working offline, and improves privacy, because data that is processed locally does not have to leave the device.

Core ML vs TensorFlow Lite.

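iOS developers use Core ML, which integrates directly with Swift, while Android developers typically use TensorFlow Lite or ONNX Runtime. On both platforms the runtime can offload inference to dedicated accelerators such as neural processing units (NPUs) when the model and the device support it, and fall back to the GPU or CPU otherwise, which keeps predictions fast enough to feel near-instant.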
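The accelerator choice described above usually comes down to a preference order with a CPU fallback. The following is a minimal, framework-independent sketch of that idea; `Backend`, `PREFERENCE`, and `pick_backend` are hypothetical names used for illustration and are not part of Core ML, TensorFlow Lite, or ONNX Runtime.

```python
from enum import Enum
from typing import Iterable


class Backend(Enum):
    """Hypothetical compute targets; real runtimes expose their own names."""
    NPU = "npu"
    GPU = "gpu"
    CPU = "cpu"


# Most specialized accelerator first, CPU last as the universal fallback.
PREFERENCE = (Backend.NPU, Backend.GPU, Backend.CPU)


def pick_backend(on_device: Iterable[Backend],
                 supported_by_model: Iterable[Backend]) -> Backend:
    """Return the most preferred backend that both the device and the model support.

    Assumption: the CPU can always run the model, so it is returned
    whenever no accelerator qualifies.
    """
    usable = set(on_device) & set(supported_by_model)
    for backend in PREFERENCE:
        if backend in usable:
            return backend
    return Backend.CPU


if __name__ == "__main__":
    # A device with an NPU running a model whose operators the NPU cannot handle.
    choice = pick_backend(
        on_device={Backend.NPU, Backend.GPU, Backend.CPU},
        supported_by_model={Backend.GPU, Backend.CPU},
    )
    print(choice.value)
```

In a real app this decision is normally delegated to the runtime (for example through a compute-unit or delegate setting) rather than written by hand; the sketch only makes the ordering explicit.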

Practical on-device AI use cases.

Real-time camera effects, voice command processing, predictive text with personal context, document scanning with instant OCR, health monitoring from sensor data, and offline translation can all now run entirely on-device. With a well-optimized model on recent hardware, latency can stay under 50 ms, although the actual figure depends on the model size and the device.
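A latency target like the 50 ms figure is easiest to trust when it is measured on the target device. The sketch below shows one way to check a budget against a high percentile instead of the average; `run_once` is a hypothetical placeholder for a single model invocation, and the warm-up count, run count, and 95th percentile are illustrative assumptions.

```python
import math
import time
from typing import Callable, List, Sequence


def measure_latency_ms(run_once: Callable[[], object],
                       warmup: int = 5, runs: int = 50) -> List[float]:
    """Time `run_once` repeatedly and return per-call latencies in milliseconds."""
    for _ in range(warmup):
        run_once()  # warm-up calls are not recorded (caches, lazy initialization)
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        run_once()
        samples.append((time.perf_counter() - start) * 1000.0)
    return samples


def percentile(samples: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile of a non-empty sequence."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def within_budget(samples: Sequence[float],
                  budget_ms: float = 50.0, pct: float = 95.0) -> bool:
    """True if the chosen percentile of the samples meets the latency budget."""
    return percentile(samples, pct) <= budget_ms


if __name__ == "__main__":
    # Stand-in workload; replace with a real inference call on the device.
    samples = measure_latency_ms(lambda: sum(range(10_000)))
    print("p95 within 50 ms:", within_budget(samples))
```

Checking a percentile matters because occasional slow calls, for instance when the device throttles, are what users notice in a real-time feature.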

Designing AI-native experiences.

AI-native apps do not just add AI features to an existing UX; they rethink the interaction model itself. Think proactive suggestions instead of search, natural language interfaces instead of forms, and adaptive UIs that learn from user behaviour.
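As a toy illustration of a proactive suggestion that learns from user behaviour, the sketch below counts which actions a user takes in each context and surfaces the most frequent ones. It is a deliberately simple frequency model, not a recommendation of how production apps do it; `SuggestionModel` and the context and action strings are hypothetical.

```python
from collections import Counter, defaultdict
from typing import Dict, List


class SuggestionModel:
    """Learns per-context action frequencies, entirely in memory on the device."""

    def __init__(self) -> None:
        # Maps a context label (e.g. "morning") to counts of actions seen in it.
        self._counts: Dict[str, Counter] = defaultdict(Counter)

    def record(self, context: str, action: str) -> None:
        """Register that the user performed `action` while in `context`."""
        self._counts[context][action] += 1

    def suggest(self, context: str, k: int = 3) -> List[str]:
        """Return up to `k` actions most often taken in `context`."""
        counts = self._counts.get(context, Counter())
        return [action for action, _ in counts.most_common(k)]


if __name__ == "__main__":
    model = SuggestionModel()
    model.record("morning", "open_calendar")
    model.record("morning", "open_calendar")
    model.record("morning", "play_podcast")
    model.record("evening", "set_alarm")
    print(model.suggest("morning", k=1))
```

Because the counts live only in the app's own state, this kind of adaptation fits the on-device model: nothing about the user's habits needs to be sent to a server.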